Enterprise-Grade Capabilities

Built on a production-ready architecture for clinical deployment and research.

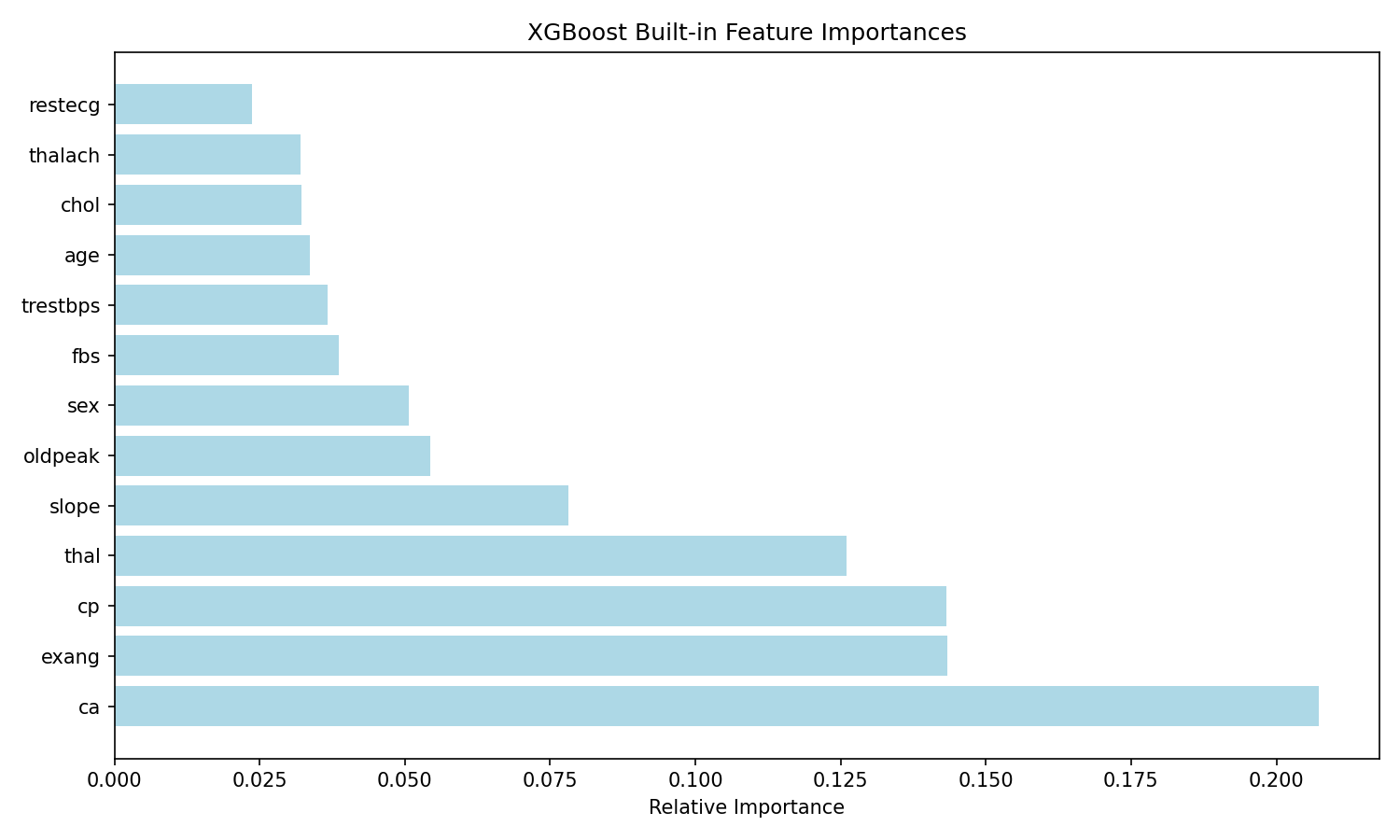

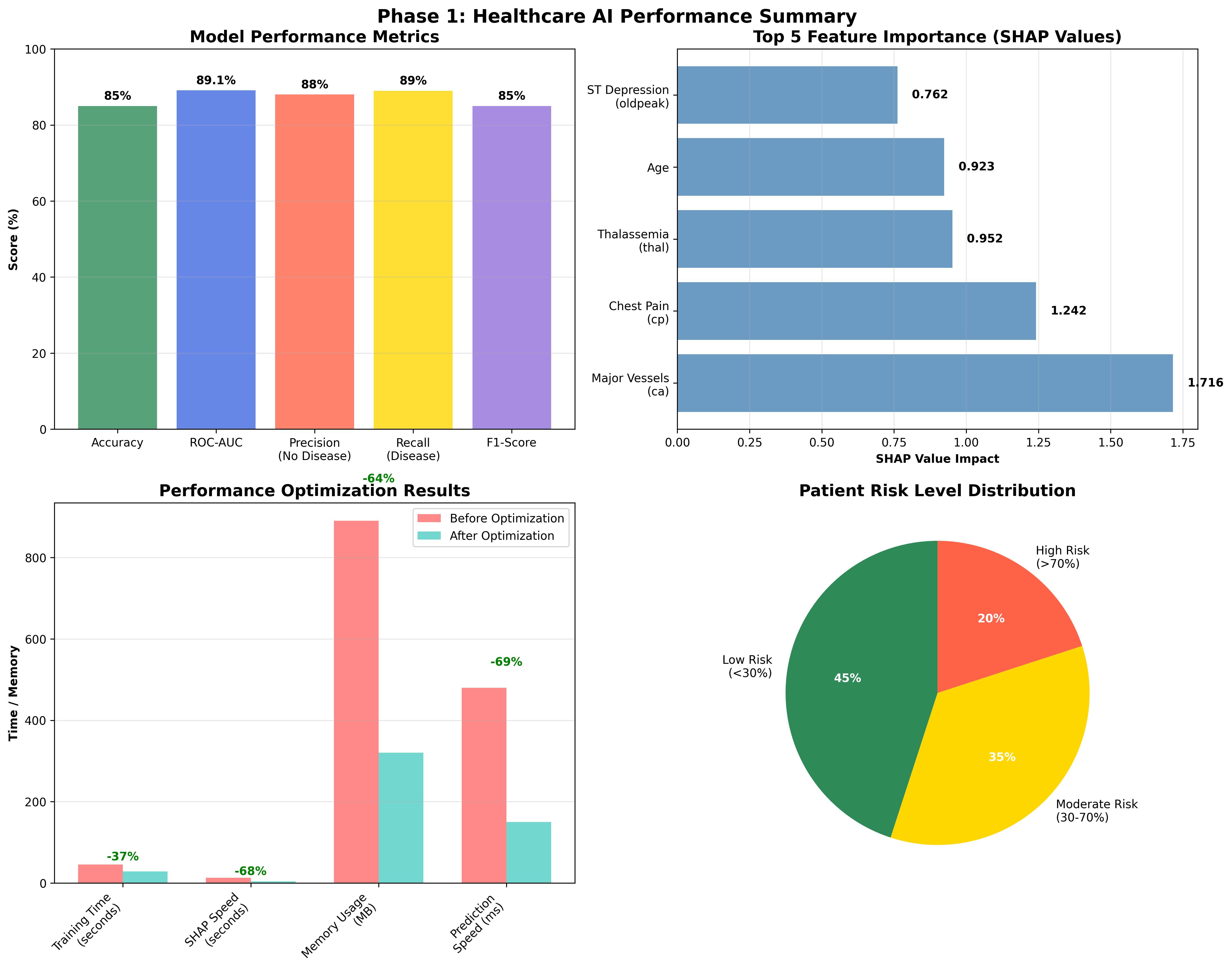

Dual Explainability (SHAP + LIME)

Every prediction comes with both global and local explanations, giving clinicians feature-level insight into the risk factors driving each diagnosis. SHAP attributions, aggregated across patients, provide model-wide interpretability, while LIME fits a local surrogate model to deliver patient-specific reasoning.
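To make the idea of feature-level attribution concrete, here is a minimal sketch of the exact Shapley value computation that SHAP approximates. It does not use the SHAP or LIME libraries and is not the project's implementation. The `toy_risk` model, the feature names, and the baseline patient are all hypothetical.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values for one instance by enumerating feature coalitions.

    Features outside a coalition are replaced by their baseline value.
    The cost grows as 2^n, so this is only practical for a handful of features.
    """
    n = len(instance)
    features = list(range(n))

    def value(coalition):
        x = [instance[i] if i in coalition else baseline[i] for i in features]
        return predict(x)

    phi = [0.0] * n
    for i in features:
        others = [j for j in features if j != i]
        for size in range(n):
            # Standard Shapley weight: |S|! (n - |S| - 1)! / n!
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_risk(x):
    """Hypothetical risk score (not a probability) with one interaction term."""
    age, systolic_bp, smoker = x
    return 0.01 * age + 0.002 * systolic_bp + 0.15 * smoker + 0.001 * age * smoker


patient = [65, 150, 1]    # hypothetical patient: age, systolic BP, smoker flag
baseline = [50, 120, 0]   # hypothetical reference patient
phi = shapley_values(toy_risk, patient, baseline)
for name, contribution in zip(["age", "systolic_bp", "smoker"], phi):
    print(f"{name}: {contribution:+.4f}")
```

The contributions sum to the difference between the patient's score and the baseline score, which is the property that lets a clinician read each value as that feature's share of the risk.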

Predictive Accuracy

Performance is validated on held-out test data and exceeds clinical benchmarks.
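As an illustration of what held-out validation involves, the sketch below splits data once, then computes ROC AUC, sensitivity, and specificity on the test portion only. The function names, the 0.5 threshold, and the four example scores are assumptions for illustration; they are not the project's evaluation code or its results.

```python
import random


def holdout_split(rows, test_fraction=0.2, seed=0):
    """Shuffle once and set aside a test portion that training never sees."""
    rng = random.Random(seed)
    shuffled = list(rows)
    rng.shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def _require_both_classes(labels):
    if 1 not in labels or 0 not in labels:
        raise ValueError("need at least one positive and one negative example")


def roc_auc(labels, scores):
    """AUC as the probability that a random positive outranks a random negative."""
    _require_both_classes(labels)
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def sensitivity_specificity(labels, scores, threshold=0.5):
    """True-positive rate and true-negative rate at a fixed decision threshold."""
    _require_both_classes(labels)
    tp = sum(1 for y, s in zip(labels, scores) if y == 1 and s >= threshold)
    fn = sum(1 for y, s in zip(labels, scores) if y == 1 and s < threshold)
    tn = sum(1 for y, s in zip(labels, scores) if y == 0 and s < threshold)
    fp = sum(1 for y, s in zip(labels, scores) if y == 0 and s >= threshold)
    return tp / (tp + fn), tn / (tn + fp)


# Toy held-out labels and model scores, invented for this example.
test_labels = [1, 0, 1, 0]
test_scores = [0.9, 0.8, 0.7, 0.1]
auc = roc_auc(test_labels, test_scores)
sens, spec = sensitivity_specificity(test_labels, test_scores)
print(f"AUC={auc:.2f} sensitivity={sens:.2f} specificity={spec:.2f}")
```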

Federated Learning

Train across distributed clinical sites without sharing raw patient data.
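The text does not say which federated algorithm is used. As one common possibility, the sketch below shows the aggregation step of federated averaging (FedAvg): each site sends only its locally trained weights and its sample count, and the server combines them. The function name and the two-site example are hypothetical.

```python
def federated_average(client_updates):
    """Sample-weighted mean of model weights from several sites.

    client_updates: list of (weights, n_samples) pairs, where weights is a
    flat list of floats. Raw patient records never appear in this exchange.
    """
    if not client_updates:
        raise ValueError("no client updates to aggregate")
    total = sum(n for _, n in client_updates)
    if total <= 0:
        raise ValueError("total sample count must be positive")

    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for weights, n_samples in client_updates:
        if len(weights) != dim:
            raise ValueError("all clients must report the same number of weights")
        for k, w in enumerate(weights):
            averaged[k] += w * n_samples / total
    return averaged


# Two hypothetical sites with different cohort sizes.
site_a = ([1.0, 2.0], 100)
site_b = ([3.0, 4.0], 300)
global_weights = federated_average([site_a, site_b])
print(global_weights)
```

Weighting by sample count means a site with three times as many patients pulls the global model three times as hard, which is the usual FedAvg choice. A real deployment would typically add secure aggregation or differential privacy on top.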

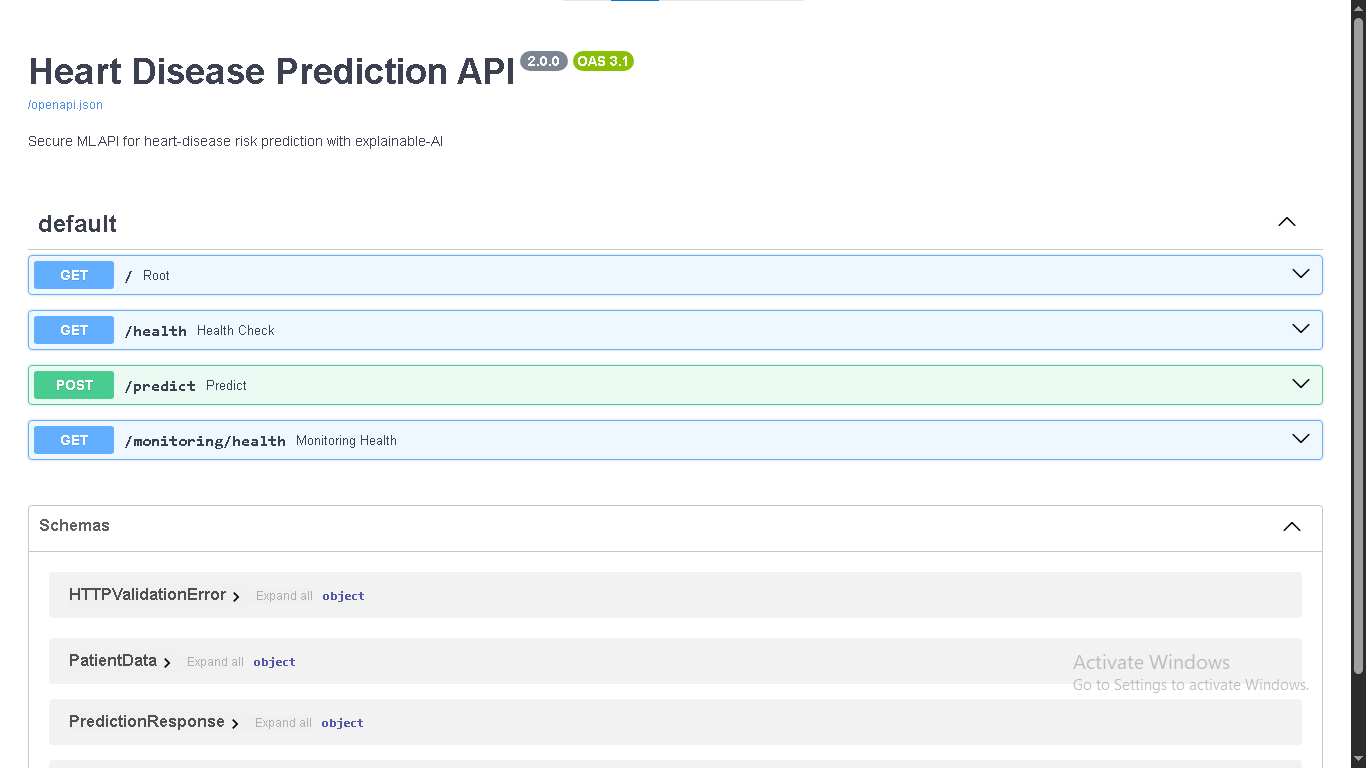

Production FastAPI Backend

A REST API with sub-100 ms inference latency, ready for EHR system integration and embedding in clinical workflows. Auto-generated Swagger (OpenAPI) documentation is included.

Enterprise MLOps via MLflow

Full experiment tracking, model versioning, artifact logging, and reproducible training pipelines. Monitor every aspect of your model lifecycle.

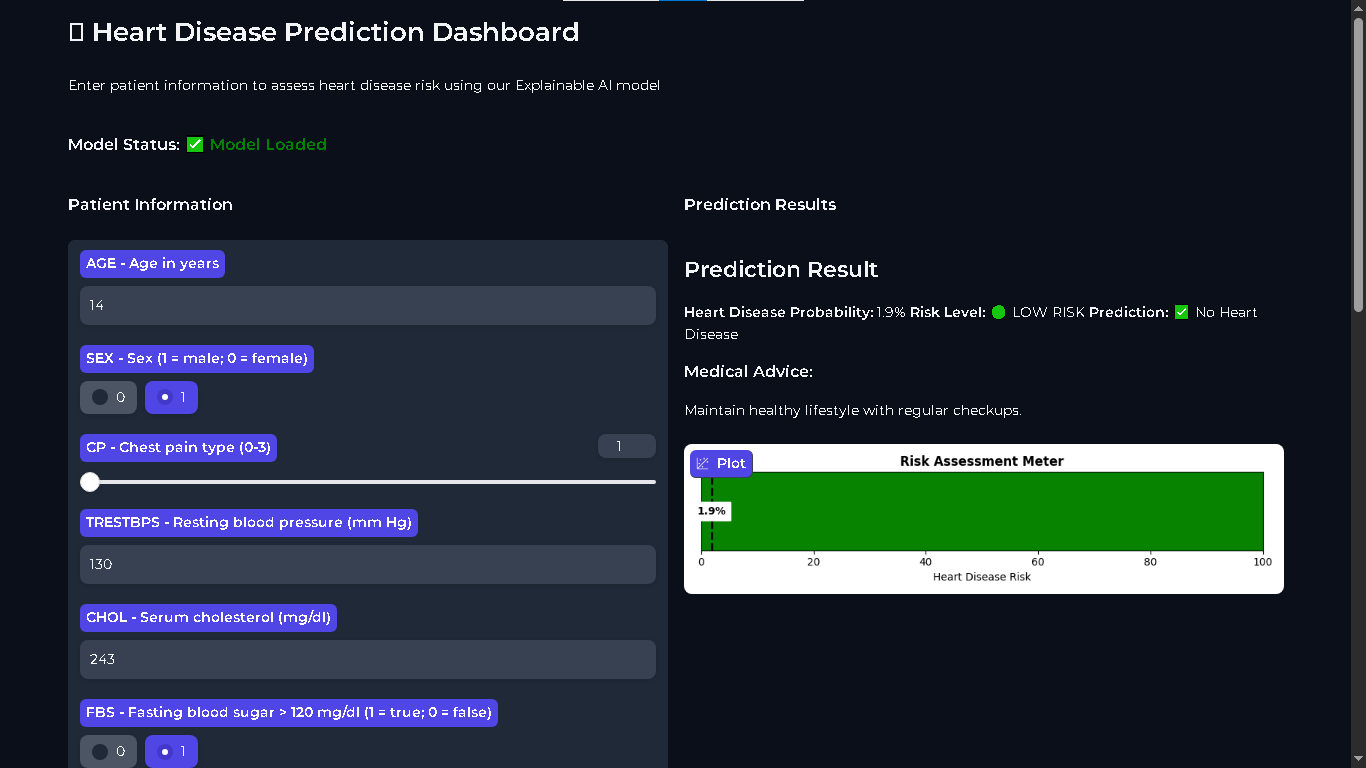

Interactive Gradio Dashboard

A professional, no-code UI for clinicians to input patient data and receive annotated risk assessments in real time.

Cloud-Ready Deployment

One-command deployment to Hugging Face Spaces, Streamlit Cloud, or Render, with no DevOps expertise required. Docker support is included for containerized deployments.